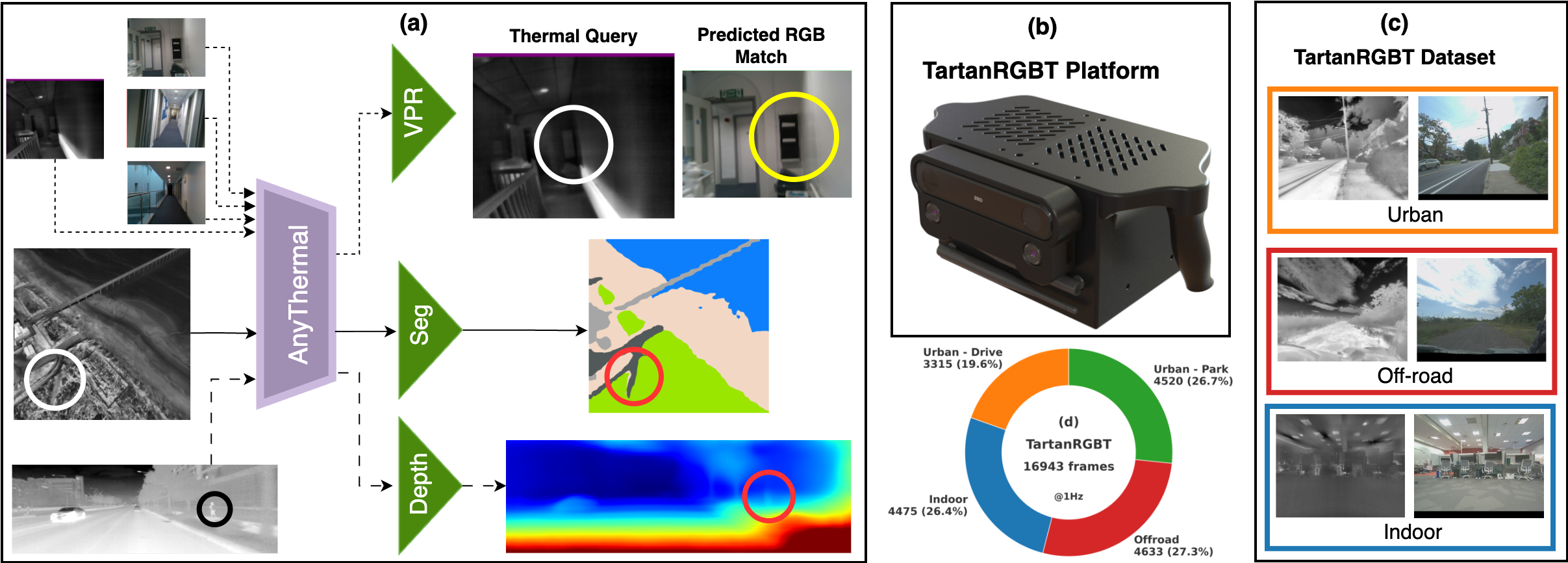

- AnyThermal Backbone: A task-agnostic thermal feature extractor developed through knowledge distillation from visual foundation models. When paired with lightweight heads, it achieves state-of-the-art results in thermal segmentation and cross-modal place recognition, while outperforming comparable RGB-based backbones in monocular thermal depth estimation.

- TartanRGBT Platform: The first open-source, hardware-synchronized framework for simultaneous stereo RGB and thermal image acquisition. By releasing CAD files and a complete software stack, the platform lowers the barrier to large-scale RGB–thermal data collection.

- TartanRGBT Dataset: A balanced collection of 16,943 synchronized RGB–thermal pairs spanning urban, indoor, off-road, and park environments. This diverse dataset substantially improves the generalization and downstream performance of thermal perception models.

AnyThermal: Towards Learning Universal Representations for Thermal Perception

TL;DR: AnyThermal is a task-agnostic backbone achieving state-of-the-art results in thermal place recognition, segmentation, and depth estimation by distilling visual foundation models. Supporting this is the TartanRGBT platform, the first open-source framework for hardware-synchronized RGB–thermal data collection. We leverage this to provide the TartanRGBT dataset—16,943 diverse RGB–thermal pairs across urban, indoor, off-road, and park environments.

Abstract

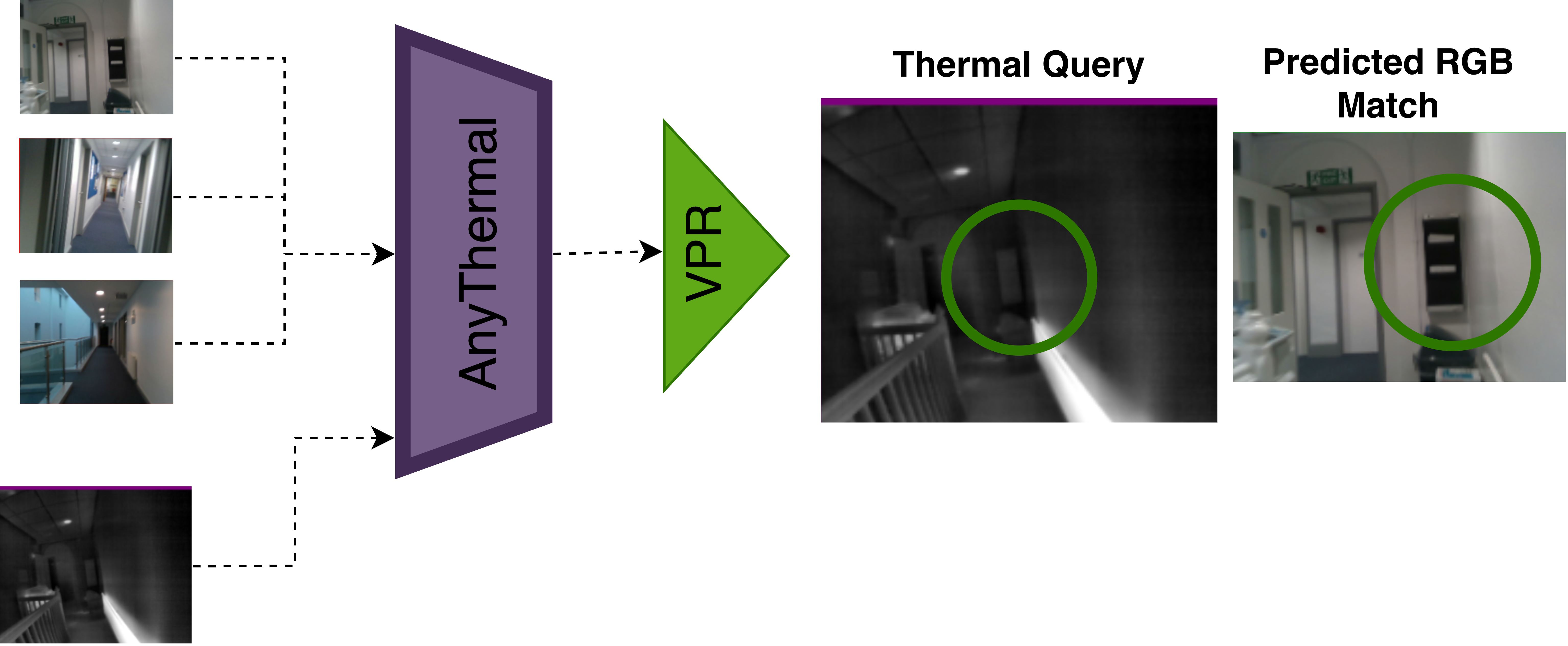

We present AnyThermal, a thermal backbone that captures robust, task-agnostic thermal features suitable for a variety of tasks such as cross-modal place recognition, thermal segmentation, and monocular depth estimation using thermal images. Existing thermal backbones are trained for a specific task on small-scale data, which limits their utility to a single environment and task. Unlike prior methods, AnyThermal can be used across a wide range of environments (indoor, aerial, off-road, urban) and tasks without task-specific training. Our key insight is to distill feature representations from visual foundation models such as DINOv2 into a thermal encoder using data collected across these environments. To bridge the diversity gap in existing RGB–thermal datasets, we introduce the TartanRGBT platform, the first open-source data collection framework with hardware-synchronized RGB–thermal image acquisition. Using this payload, we collect the TartanRGBT dataset, a diverse and balanced dataset spanning four environments. We demonstrate the effectiveness of AnyThermal and TartanRGBT, achieving state-of-the-art results with improvements of up to 36% across diverse environments and downstream tasks.

Key Contributions

AnyThermal Backbone

RGB–Thermal Distillation

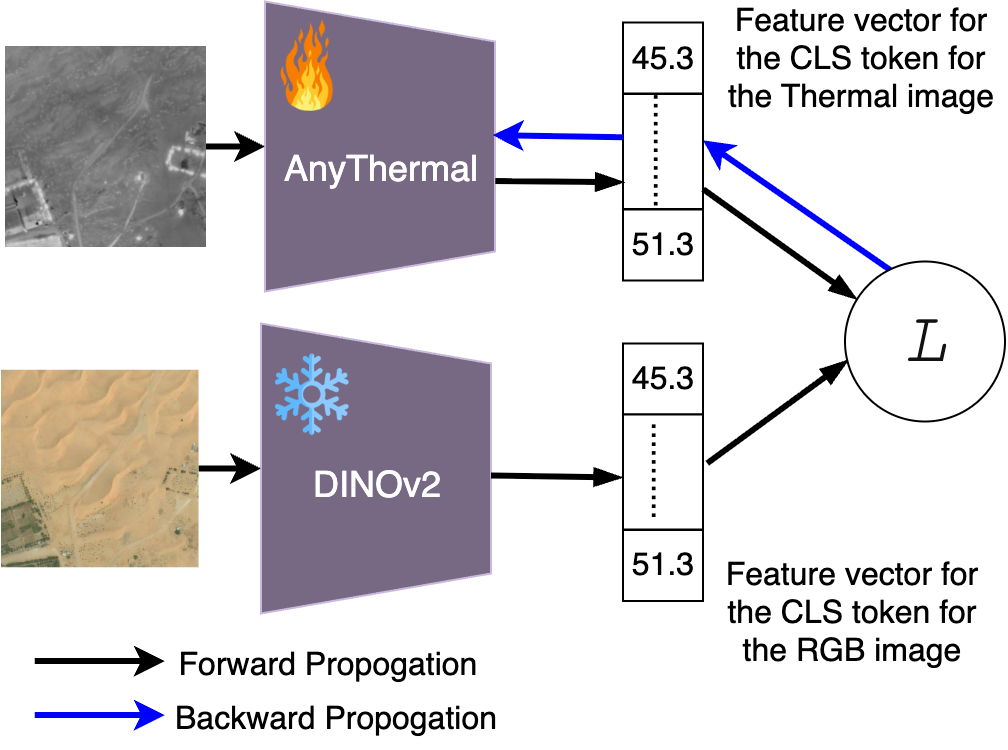

AnyThermal uses two ViT-B/14 DINOv2 encoders: a frozen RGB teacher and a trainable thermal student, both initialized with pre-trained RGB weights.

Thermal images are converted to three channels and passed through the student; a contrastive loss aligns the CLS-token embeddings of corresponding RGB–thermal pairs, encouraging shared global semantics while relaxing pixel-perfect alignment.
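
The alignment objective described above can be illustrated with a small sketch. It assumes a symmetric InfoNCE-style loss over cosine similarities; the page only states that a contrastive loss aligns CLS-token embeddings, so the exact loss form, the `temperature` value, and all function names here are illustrative assumptions rather than the authors' implementation.

```python
import math

def l2_normalize(vec):
    # Scale a vector to unit length; a zero vector is left unchanged.
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]

def cross_entropy_diag(logits):
    # Mean cross-entropy where the correct class for row i is column i.
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def symmetric_contrastive_loss(rgb_cls, thermal_cls, temperature=0.07):
    # rgb_cls[i] and thermal_cls[i] are CLS embeddings of the same scene.
    # temperature is an assumed hyperparameter, not taken from the source.
    rgb = [l2_normalize(v) for v in rgb_cls]
    thr = [l2_normalize(v) for v in thermal_cls]
    # logits[i][j]: scaled cosine similarity between thermal i and RGB j.
    logits = [[sum(a * b for a, b in zip(t, r)) / temperature for r in rgb]
              for t in thr]
    logits_t = [list(col) for col in zip(*logits)]
    # Average the thermal-to-RGB and RGB-to-thermal directions.
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))
```

With a frozen teacher, only the thermal embeddings would receive gradients; the loss is small when each thermal embedding is most similar to its own RGB partner and large when pairs are mismatched.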

Distillation is performed across multiple RGB–thermal datasets, enabling the backbone to learn environment-agnostic thermal representations that generalize across tasks and domains. In particular, AnyThermal is trained on five datasets spanning diverse environments: ViVID++ (outdoor sequences), STheREO, Freiburg, and TartanRGBT (ours) for urban driving; the Boson Nighttime Dataset for aerial scenarios; and TartanRGBT (ours) again for indoor and off-road environments.

Task-agnostic knowledge distillation from a frozen RGB DINOv2 teacher to a trainable thermal student (AnyThermal), enabling label-free learning of generalizable thermal features across environments.

Task Heads (Frozen Backbone)

- Cross-modal Place Recognition: SALAD head over AnyThermal features with triplet loss.

- Thermal Segmentation: a lightweight two-layer MLP head over ViT patch embeddings.

- Mono-thermal Depth: MiDaS-style encoder–decoder using multi-scale AnyThermal features.

TartanRGBT Platform

CAD model and wiring overview of the handheld TartanRGBT platform.

Camera and sensor placement in the TartanRGBT platform.

The TartanRGBT platform is a handheld rig that captures synchronized stereo RGB, stereo thermal, and IMU at 30 Hz using a ZED X camera, two FLIR Boson 640+ thermal cameras, and an NVIDIA Jetson AGX Orin 64 GB computer.

All cameras are hardware-timed: the ZED X pair is factory-synced, and a trigger from the capture card synchronizes the thermal cameras via external sync pins in slave mode.

A custom 3D-printed enclosure with ergonomic handles, cooling fans, and exposed ports makes the system field-ready, while Docker-based auto-launch and a single recording button simplify operation.

Calibration & Registration

- Thermal intrinsics are calibrated using a custom heated checkerboard, followed by fisheye rectification of thermal images.

- Extrinsics between RGB and thermal cameras are derived from the CAD model, enabling 3D-aware alignment.

- Registered RGB–thermal pairs are produced by estimating dense depth with FoundationStereo, transforming points into the thermal frame, and projecting with thermal intrinsics.
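
The registration step in the last bullet can be sketched for a single pixel as follows. This is a minimal illustration that assumes rectified pinhole cameras with intrinsics given as `(fx, fy, cx, cy)` tuples and an extrinsic rotation `R` and translation `t` from the RGB frame to the thermal frame; the function names and the numbers in the usage note are hypothetical, and the depth value stands in for the output of a stereo model such as FoundationStereo.

```python
def backproject(u, v, depth, fx, fy, cx, cy):
    # Lift an RGB pixel with known depth to a 3D point in the RGB camera frame.
    return ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)

def transform(point, R, t):
    # Apply the RGB-to-thermal extrinsics: p_thermal = R @ p_rgb + t.
    return tuple(sum(R[i][j] * point[j] for j in range(3)) + t[i]
                 for i in range(3))

def project(point, fx, fy, cx, cy):
    # Project a 3D point with pinhole intrinsics; None if behind the camera.
    x, y, z = point
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)

def rgb_pixel_to_thermal(u, v, depth, K_rgb, R, t, K_thermal):
    # Chain the three steps: back-project, change frame, re-project.
    p_rgb = backproject(u, v, depth, *K_rgb)
    p_thermal = transform(p_rgb, R, t)
    return project(p_thermal, *K_thermal)
```

For example, with identity rotation, a 0.1 m baseline along x, a focal length of 500 px, and a depth of 2 m, the principal-point pixel shifts by 500 × 0.1 / 2 = 25 px in the thermal image. Applying the same mapping to every pixel of a dense depth map gives the registered pair.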

| α = 0.00 | α = 0.25 | α = 0.50 | α = 0.75 | α = 1.00 |

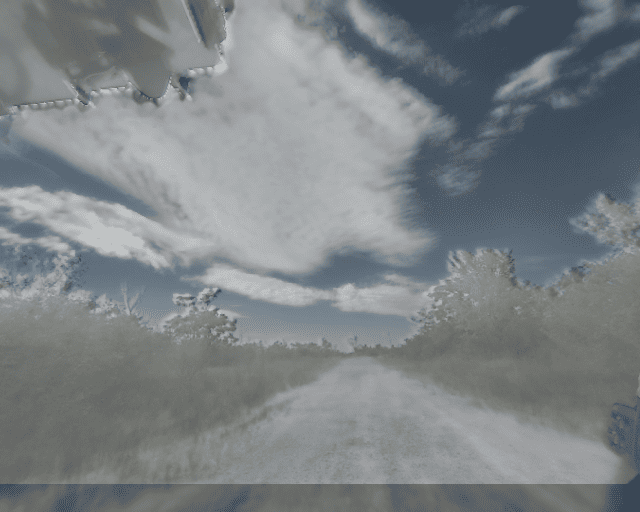

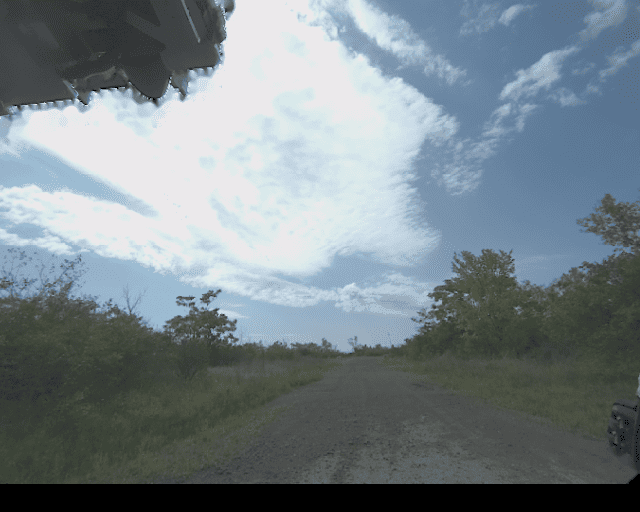

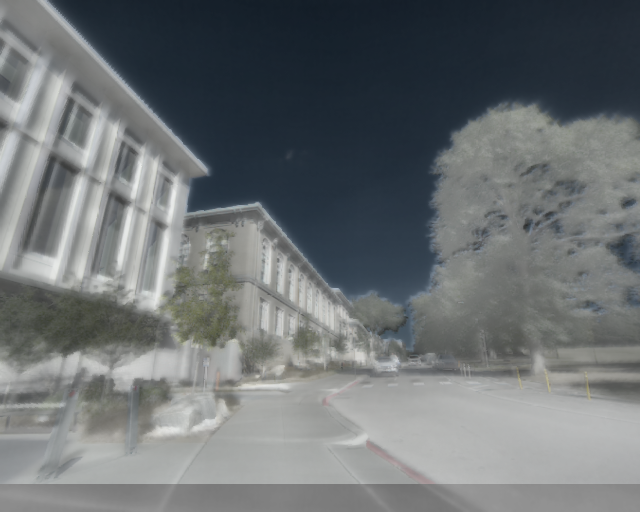

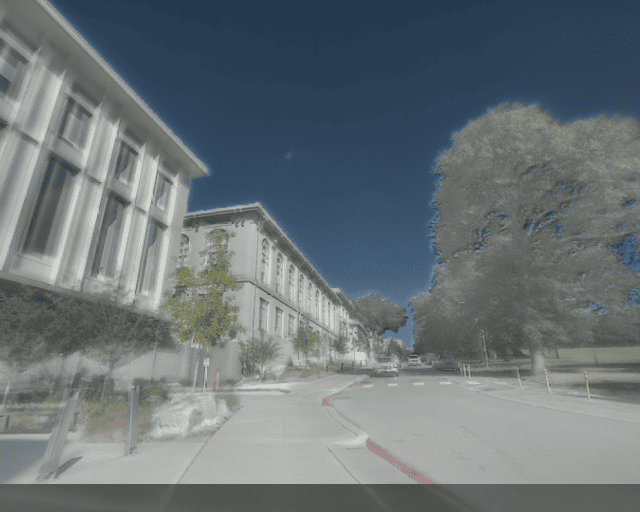

Alpha-blended RGB–thermal overlays across different scenes (α controls the transparency of the thermal image over the RGB image), showing pixel-wise alignment.
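
As a small illustration of how such overlays are typically formed, the sketch below blends one pixel. It assumes the common convention that α = 0 shows only the RGB image and α = 1 shows only the thermal image; the page does not spell out the direction, and `alpha_blend` is a hypothetical helper name.

```python
def alpha_blend(rgb_pixel, thermal_pixel, alpha):
    # Linear blend per channel: alpha * thermal + (1 - alpha) * rgb.
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return tuple(round(alpha * t + (1.0 - alpha) * c)
                 for c, t in zip(rgb_pixel, thermal_pixel))
```

If the two images are well registered, edges stay sharp at intermediate α values instead of showing doubled contours.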

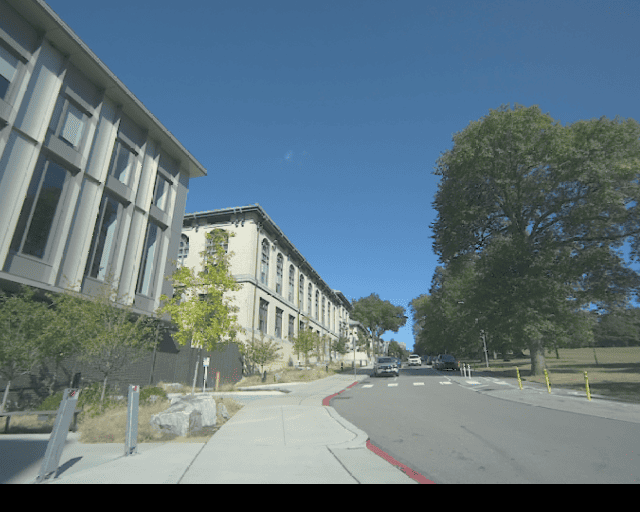

TartanRGBT Dataset

Indoor

Parks

Off-road

Urban

The TartanRGBT dataset consists of 16,943 synchronized, registered RGB–thermal pairs sampled at 1 Hz for non-redundant distillation, covering indoor, urban driving, parks, and off-road environments.

Each sequence includes stereo RGB, stereo thermal, IMU, and thermal FFC status. We will also release the data as ROS bag files, allowing users to extract synchronized sensor streams at their desired sampling frequency.
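
Extracting frames at a lower rate from a 30 Hz stream can be sketched with a simple timestamp filter. This is an illustrative helper that works on a plain list of timestamps in seconds; `subsample_by_rate` is a hypothetical name, and reading the timestamps out of the bag files is left out.

```python
def subsample_by_rate(timestamps, target_hz=1.0):
    # Keep the first frame, then the first frame at or after each period.
    period = 1.0 / target_hz
    kept = []
    next_time = None
    for index, ts in enumerate(timestamps):
        if next_time is None or ts >= next_time:
            kept.append(index)
            next_time = ts + period
    return kept
```

Called on three seconds of 30 Hz timestamps with `target_hz=1.0`, this keeps one frame per second.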

Diversity Compared to Existing RGB–T Datasets

| Dataset | Platform | RGB–T Pairs @1 Hz | Sync | Registered | Indoor | Off-road | Aerial | Urban Drive | Urban Park |
|---|---|---|---|---|---|---|---|---|---|
| MS2 | Vehicle | 16,215 | Yes | No | No | No | No | Yes | No |
| ViVID++ | Handheld/Vehicle | 14,824 | Mixed | No | Limited | No | Yes | Yes | No |
| CART | Handheld/Drone | 9,678 | Mixed | Yes | No | Yes | Yes | Yes | No |
| OdomBeyondVision | Drone/UGV/Handheld | 7,129 | Yes | No | Yes | No | No | No | No |
| TartanRGBT (Ours) | Handheld | 16,943 | Yes | Yes | Yes | Yes | No | Yes | Yes |

Results Across Tasks

Cross-Modal Place Recognition

AnyThermal with a SALAD head (AnyThermal-VPR) surpasses RGB-only and RGB–thermal baselines in Recall@1 (R@1) on MS2 (urban), CART (aerial), and OdomBeyondVision (OBV, indoor).

| Model | Backbone | Head | MS2 R@1 | CART R@1 | OBV R@1 |
|---|---|---|---|---|---|
| DINOv2 | DINOv2 | CLS | 27.21 | 25.98 | 29.49 |
| SALAD | DINOv2 | SALAD | 76.97 | 49.38 | 38.94 |
| ImageBind | ViT-H | CLS | 0.79 | 1.13 | 10.25 |
| SGM | ResNet-18 | NetVLAD | 20.02 | 45.59 | 21.05 |
| AnyThermal | AnyThermal | CLS | 75.39 | 45.45 | 45.40 |
| AnyThermal-VPR | AnyThermal | SALAD | 81.11 | 56.00 | 53.17 |
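
For readers unfamiliar with the metric, Recall@1 can be sketched as below: each query descriptor retrieves its single most similar database descriptor, and the query counts as a hit if that match is a true positive. The sketch assumes L2-normalized descriptors (so the dot product equals cosine similarity) and a per-query set of correct database indices; how those positives are defined for each benchmark is not covered here, and the function name is illustrative.

```python
def recall_at_1(query_descs, db_descs, query_positives):
    # query_positives[i]: set of database indices that are correct for query i.
    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    hits = 0
    for query, positives in zip(query_descs, query_positives):
        # Index of the database descriptor most similar to this query.
        best = max(range(len(db_descs)), key=lambda j: dot(query, db_descs[j]))
        hits += best in positives
    return 100.0 * hits / len(query_descs)
```

In the cross-modal setting, the queries and the database come from different modalities, which is why a shared embedding space matters.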

Qualitative Cross-Modal Place Recognition

Middle: The RGB-only baseline retrieves an incorrect database match under significant illumination and appearance changes.

Right: AnyThermal correctly retrieves the true location by leveraging robust thermal representations that are invariant to lighting variations.

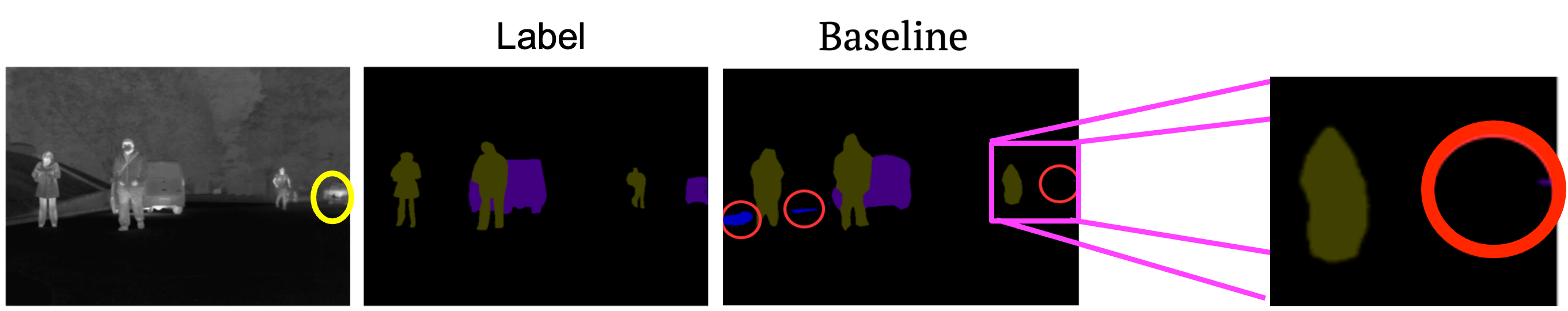

Thermal Segmentation

On MFNet, AnyThermal with a two-layer MLP segmentation head achieves 53.47% mIoU and runs at 6.79 FPS on Orin. It outperforms both RTFNet-152 and MCNet in mIoU while running about 3.6× faster than MCNet, the closest competitor in accuracy (6.79 vs. 1.88 FPS).

| Model | Params (M) | mIoU (%) | FPS (Orin) |
|---|---|---|---|
| RTFNet-152 | 196.37 | 47.00 | 8.37 |
| MCNet | 54.65 | 51.95 | 1.88 |
| RGB DINO-SEG | 87.02 | 45.46 | 6.79 |
| AnyThermal-SEG | 87.02 | 53.47 | 6.79 |
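
The mIoU column follows the usual definition: per-class intersection over union, averaged over classes. The sketch below computes it over flat lists of predicted and ground-truth labels and skips classes that appear in neither; benchmark-specific conventions (for example, accumulating counts over the whole test set or ignoring certain labels) may differ, so treat it as an illustration only.

```python
def mean_iou(pred, target, num_classes):
    # pred and target are flat sequences of integer class labels.
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union > 0:  # skip classes absent from both prediction and target
            ious.append(inter / union)
    return 100.0 * sum(ious) / len(ious)
```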

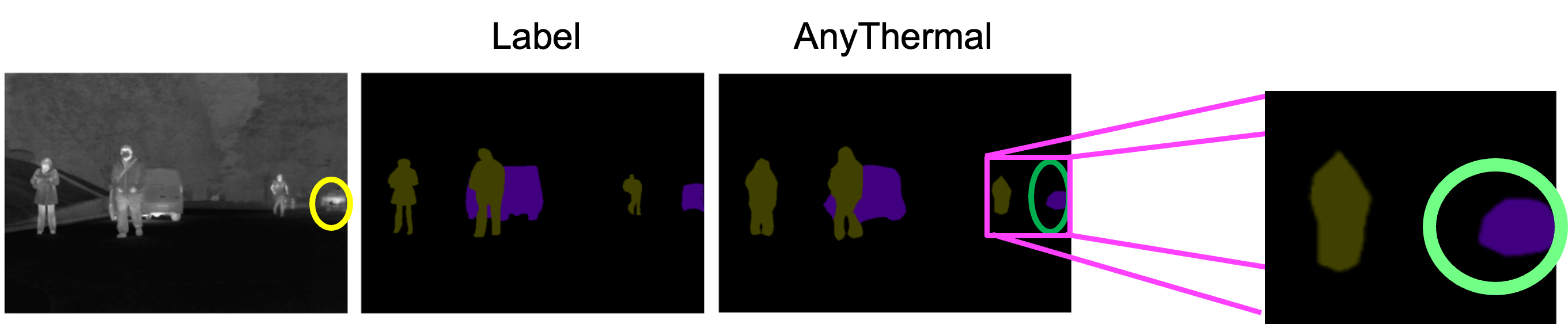

Baseline segmentation vs. AnyThermal prediction.

Improved boundary and class consistency with AnyThermal.

Mono-Thermal Depth Estimation

Plugging AnyThermal into MiDaS on MS2 yields lower error than the EfficientNet-Lite3 and RGB DINOv2 backbones on all four metrics (AbsRel, SqRel, RMSE, and RMSElog).

| Backbone | AbsRel ↓ | SqRel ↓ | RMSE ↓ | RMSElog ↓ |
|---|---|---|---|---|
| EfficientNet-Lite3 | 0.1015 | 0.3955 | 2.9587 | 0.1417 |
| DINOv2 ViT-B/14 | 0.0905 | 0.3177 | 2.7493 | 0.1208 |
| AnyThermal | 0.0883 | 0.3142 | 2.7432 | 0.1182 |
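
The four error metrics in the table are standard in monocular depth evaluation. A minimal sketch of their usual definitions is given below, assuming `pred` and `gt` are equal-length lists of positive depths in meters at valid pixels; any depth capping or masking used in the actual evaluation is not specified on this page and is omitted.

```python
import math

def depth_metrics(pred, gt):
    # Standard depth errors averaged over valid pixels (lower is better).
    n = len(gt)
    pairs = list(zip(pred, gt))
    abs_rel = sum(abs(p - g) / g for p, g in pairs) / n
    sq_rel = sum((p - g) ** 2 / g for p, g in pairs) / n
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    rmse_log = math.sqrt(
        sum((math.log(p) - math.log(g)) ** 2 for p, g in pairs) / n)
    return {"AbsRel": abs_rel, "SqRel": sq_rel,
            "RMSE": rmse, "RMSElog": rmse_log}
```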

Scaling Data & Diversity

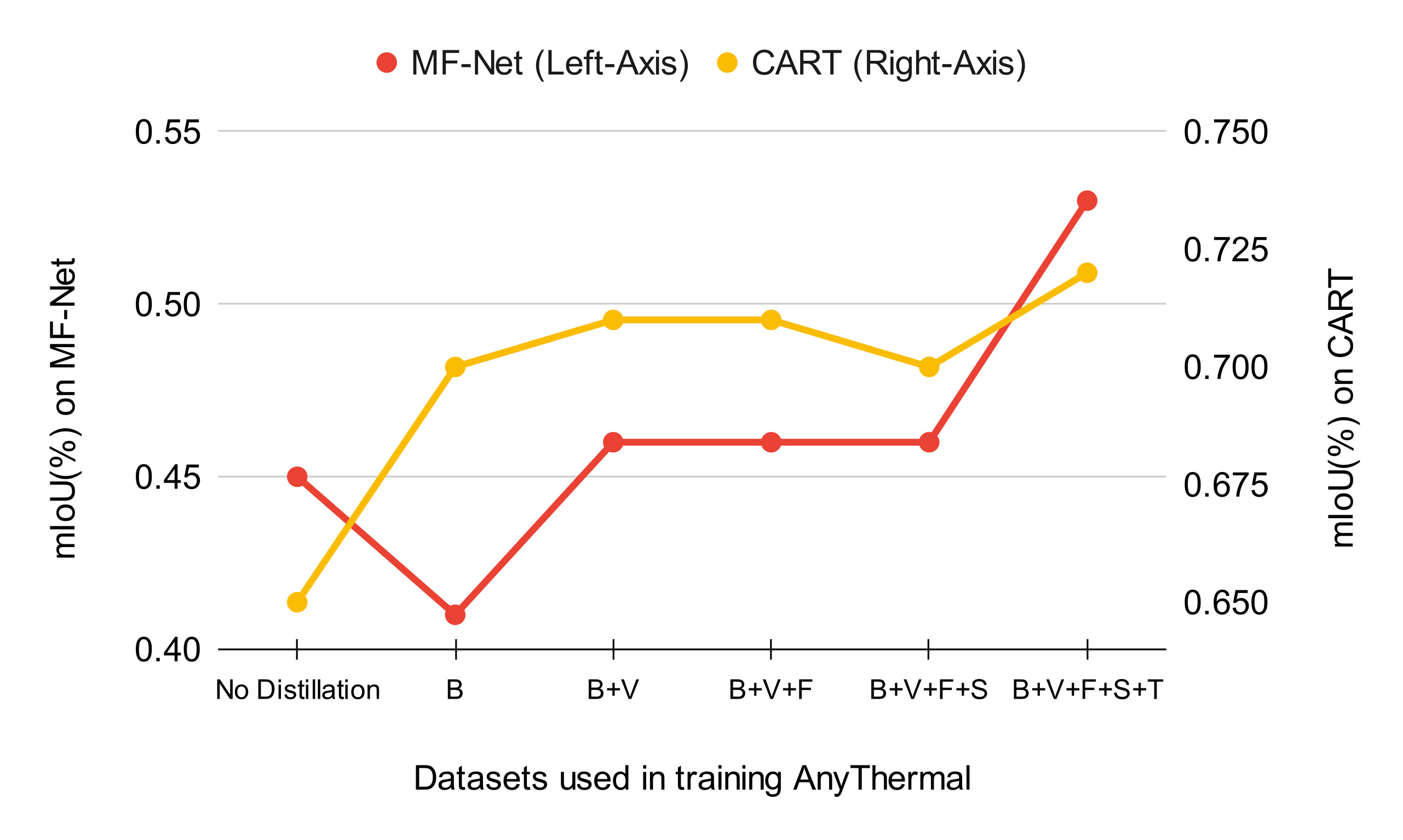

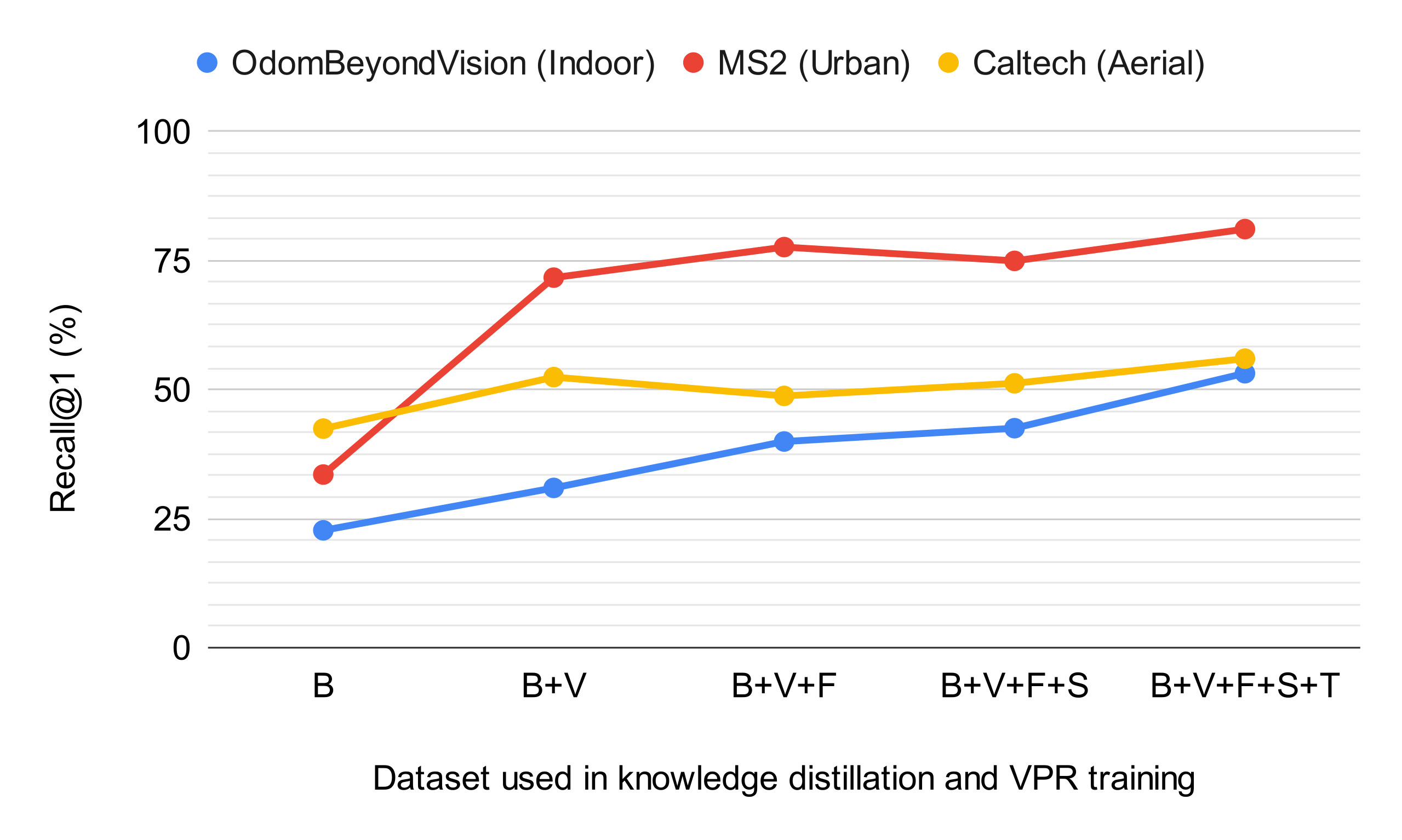

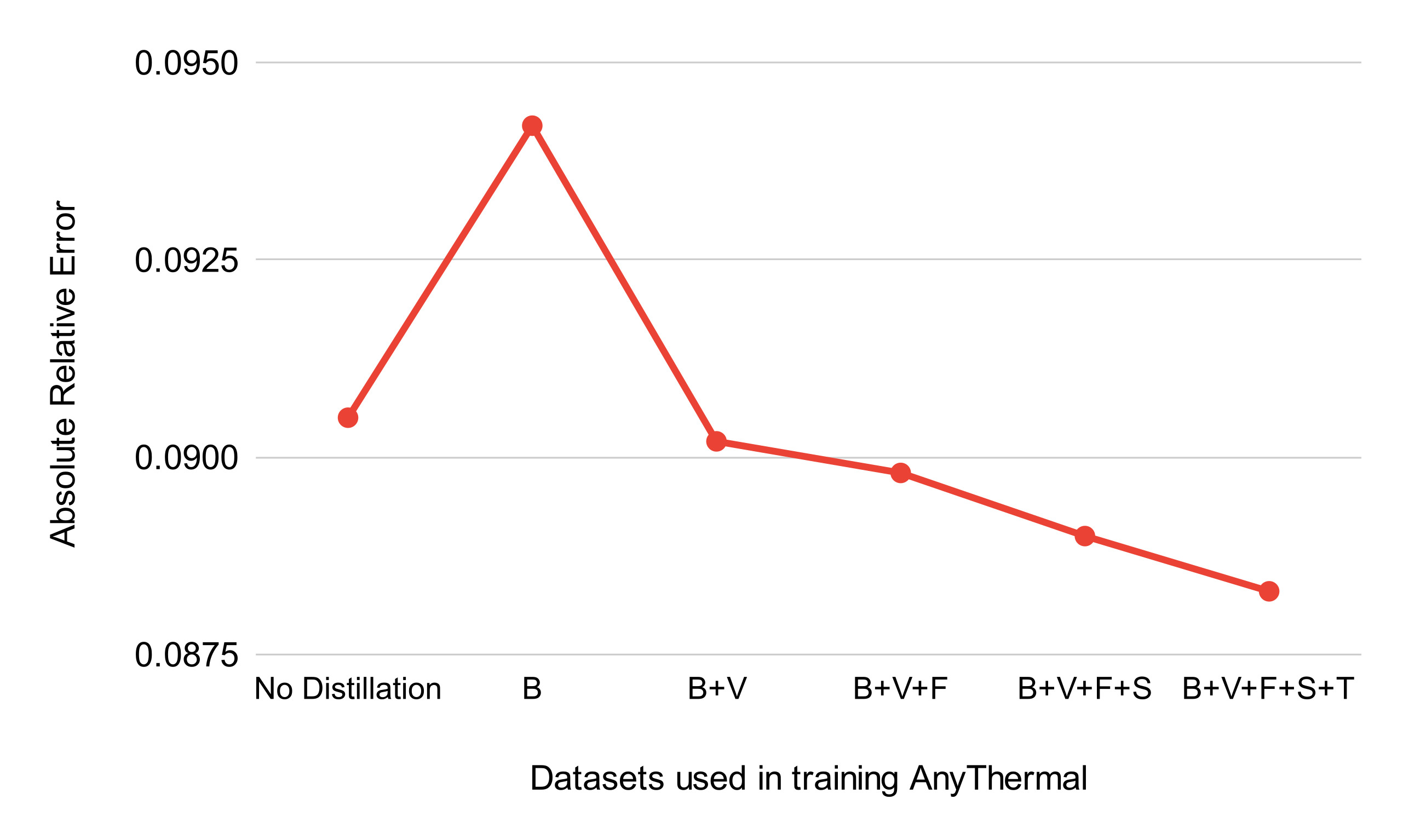

Ablation studies over pre-training datasets show that simply adding more urban data leads to performance saturation. In contrast, incorporating TartanRGBT significantly improves cross-modal place recognition, thermal segmentation, and depth estimation across domains.

The observed trends indicate that AnyThermal has not yet plateaued with the current scale of RGB–thermal data, suggesting further gains from broader and more diverse data collection.

Training on a single aerial dataset introduces domain bias, reducing performance in urban scenes while improving results on aerial benchmarks. This highlights the importance of multi-domain RGB–thermal data for learning transferable thermal representations.

Thermal segmentation performance vs. pre-training datasets.

Cross-modal place recognition performance vs. dataset diversity.

Monocular thermal depth estimation error vs. dataset diversity.

BibTeX

If you find our repository useful, please cite our paper in your work:

@misc{maheshwari2026anythermallearninguniversalrepresentations,
  title={AnyThermal: Towards Learning Universal Representations for Thermal Perception},
  author={Parv Maheshwari and Jay Karhade and Yogesh Chawla and Isaiah Adu and Florian Heisen and Andrew Porco and Andrew Jong and Yifei Liu and Santosh Pitla and Sebastian Scherer and Wenshan Wang},
  year={2026},
  eprint={2602.06203},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2602.06203},
}